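Beginner using the Scrapy framework, ran into a problem

I'm new to the Scrapy framework and have run into a problem. Could anyone give me some pointers?

1. Spider file: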

import scrapy

from ..items import MediaproItem


class ImgSpider(scrapy.Spider):
    name = "img"
    # allowed_domains = ["www.xxx.com"]
    start_urls = ["https://pic.netbian.com/4kmeinv/"]

    def parse(self, response):
        img_list = response.xpath('//*[@id="main"]/div[3]/ul/li')
        for li in img_list:  # renamed from `list`, which shadows the builtin
            src = li.xpath('./a/img/@src').extract_first()
            if src is None:
                continue  # skip entries without an image
            # urljoin avoids the double slash that plain concatenation produces
            img_url = response.urljoin(src)
            # print(img_url)
            item = MediaproItem()
            item["img_url"] = img_url
            yield item
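The image URLs in the log output further down contain a double slash (`https://pic.netbian.com//uploads/...`), which suggests the `src` attribute already starts with `/` before the base URL is prepended. A minimal sketch of resolving such a value with the standard library's `urljoin`; the helper name `absolute_image_url` and the sample `src` value are made up for illustration:

```python
from urllib.parse import urljoin


def absolute_image_url(page_url: str, src: str) -> str:
    """Resolve an <img> src value against the URL of the page it came from."""
    return urljoin(page_url, src)


if __name__ == "__main__":
    page = "https://pic.netbian.com/4kmeinv/"
    # Hypothetical src value in the same style as the scraped ones
    src = "/uploads/allimg/230614/example.jpg"
    print("https://pic.netbian.com/" + src)  # plain concatenation: double slash
    print(absolute_image_url(page, src))     # urljoin: single slash
```

Inside a spider, `response.urljoin(src)` performs the same resolution against the response's own URL.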

2. items.py:

import scrapy


class MediaproItem(scrapy.Item):
    img_url = scrapy.Field()

3. pipelines.py:

import scrapy
from scrapy.pipelines.images import ImagesPipeline


# A custom image pipeline class must inherit from ImagesPipeline
class mediaPileline(ImagesPipeline):
    def get_media_requests(self, item, info):
        img_src = item['img_url']
        print(img_src, 'download started')
        yield scrapy.Request(img_src)

    # Set the image file name (only the name to store it under needs to be returned)
    def file_path(self, request, response=None, info=None, *, item=None):
        imgName = request.url.split('/')[-1]
        print(imgName, 'downloaded and saved!')
        return imgName
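The `file_path` method above takes everything after the last `/` of the request URL as the file name. As a hedged alternative, the sketch below parses the URL first so that a query string, if one were ever present, would not end up in the name. The function name `image_name_from_url` is hypothetical, and the query string in the usage line is invented; the rest of that URL comes from the log output.

```python
import posixpath
from urllib.parse import urlparse


def image_name_from_url(url: str) -> str:
    """Return the last path segment of a URL, ignoring any query string."""
    return posixpath.basename(urlparse(url).path)


if __name__ == "__main__":
    url = "https://pic.netbian.com//uploads/allimg/230614/004222-1686674542bb44.jpg?v=2"
    print(image_name_from_url(url))
```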

4. settings.py:

```python
ROBOTSTXT_OBEY = False
LOG_LEVEL = 'DEBUG'
USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/113.0.0.0 Safari/537.36"

ITEM_PIPELINES = {
    "mediapro.pipelines.mediaPileline": 300,
}

# Raw string so the backslashes in the Windows path are not read as escapes
IMAGES_STORE = r'D:\py\girlsLib'
```

5. Output:

PS D:\py\mediapro> scrapy crawl img

2023-06-14 23:16:44 [scrapy.utils.log] INFO: Scrapy 2.8.0 started (bot: mediapro)

2023-06-14 23:16:44 [scrapy.utils.log] INFO: Versions: lxml 4.9.2.0, libxml2 2.9.12, cssselect 1.2.0, parsel 1.8.1, w3lib 2.1.1, Twisted 22.10.0, Python 3.10.10 (tags/v3.10.10:aad5f6a, Feb 7 2023, 17:20:36) [MSC v.1929 64 bit (AMD64)], pyOpenSSL 23.1.1 (OpenSSL 3.1.0 14 Mar 2023), cryptography 40.0.2, Platform Windows-10-10.0.22621-SP0

2023-06-14 23:16:44 [scrapy.crawler] INFO: Overridden settings:

{'BOT_NAME': 'mediapro',

'FEED_EXPORT_ENCODING': 'utf-8',

'NEWSPIDER_MODULE': 'mediapro.spiders',

'REQUEST_FINGERPRINTER_IMPLEMENTATION': '2.7',

'SPIDER_MODULES': ['mediapro.spiders'],

'TWISTED_REACTOR': 'twisted.internet.asyncioreactor.AsyncioSelectorReactor',

'USER_AGENT': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '

'(KHTML, like Gecko) Chrome/113.0.0.0 Safari/537.36'}

2023-06-14 23:16:44 [asyncio] DEBUG: Using selector: SelectSelector

2023-06-14 23:16:44 [scrapy.utils.log] DEBUG: Using reactor: twisted.internet.asyncioreactor.AsyncioSelectorReactor

2023-06-14 23:16:44 [scrapy.utils.log] DEBUG: Using asyncio event loop: asyncio.windows_events._WindowsSelectorEventLoop

2023-06-14 23:16:44 [scrapy.extensions.telnet] INFO: Telnet Password: 28dc3797c3f44a85

2023-06-14 23:16:44 [scrapy.middleware] INFO: Enabled extensions:

['scrapy.extensions.corestats.CoreStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.logstats.LogStats']

2023-06-14 23:16:45 [scrapy.middleware] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.httpproxy.HttpProxyMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2023-06-14 23:16:45 [scrapy.middleware] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

2023-06-14 23:16:45 [scrapy.middleware] WARNING: Disabled mediaPileline: ImagesPipeline requires installing Pillow 4.0.0 or later

2023-06-14 23:16:45 [scrapy.middleware] INFO: Enabled item pipelines:

[]

2023-06-14 23:16:45 [scrapy.core.engine] INFO: Spider opened

2023-06-14 23:16:45 [scrapy.extensions.logstats] INFO: Crawled 0 pages (at 0 pages/min), scraped 0 items (at 0 items/min)

2023-06-14 23:16:45 [scrapy.extensions.telnet] INFO: Telnet console listening on 127.0.0.1:6023

2023-06-14 23:16:45 [scrapy.core.engine] DEBUG: Crawled (200) <GET https://pic.netbian.com/4kmeinv/> (referer: None)

2023-06-14 23:16:45 [py.warnings] WARNING: C:\Python\Python310\lib\site-packages\scrapy\selector\unified.py:83: UserWarning: Selector got both text and root, root is being ignored.

super().__init__(text=text, type=st, root=root, **kwargs)

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230614/004222-1686674542bb44.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230614/004055-168667445520a2.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230609/235756-168632627692da.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230609/235121-168632588196df.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230608/000058-1686153658907f.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/221216/095854-1671155934fe32.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230224/131035-1677215435a04e.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230209/002530-1675873530bece.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230428/002509-16826127097cad.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/220331/002729-1648657649c9dd.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/210718/001826-16265387066216.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/210831/102129-16303764895142.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/220823/000930-1661184570f815.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/220131/012219-16435633391d32.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/220205/002942-1643992182534d.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230518/002935-16843409759b77.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230516/194258-16842373781c8b.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230513/002500-1683908700a26d.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/230531/002316-16854637964fdb.jpg'}

2023-06-14 23:16:46 [scrapy.core.scraper] DEBUG: Scraped from <200 https://pic.netbian.com/4kmeinv/>

{'img_url': 'https://pic.netbian.com//uploads/allimg/211219/114328-1639885408db64.jpg'}

2023-06-14 23:16:46 [scrapy.core.engine] INFO: Closing spider (finished)

2023-06-14 23:16:46 [scrapy.statscollectors] INFO: Dumping Scrapy stats:

{'downloader/request_bytes': 300,

'downloader/request_count': 1,

'downloader/request_method_count/GET': 1,

'downloader/response_bytes': 4239,

'downloader/response_count': 1,

'downloader/response_status_count/200': 1,

'elapsed_time_seconds': 0.405969,

'finish_reason': 'finished',

'finish_time': datetime.datetime(2023, 6, 14, 15, 16, 46, 18015),

'httpcompression/response_bytes': 11515,

'httpcompression/response_count': 1,

'item_scraped_count': 20,

'log_count/DEBUG': 24,

'log_count/INFO': 10,

'log_count/WARNING': 2,

'response_received_count': 1,

'scheduler/dequeued': 1,

'scheduler/dequeued/memory': 1,

'scheduler/enqueued': 1,

'scheduler/enqueued/memory': 1,

'start_time': datetime.datetime(2023, 6, 14, 15, 16, 45, 612046)}

2023-06-14 23:16:46 [scrapy.core.engine] INFO: Spider closed (finished)

PS D:\py\mediapro>

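There are no syntax errors, but only the image links get parsed. The log shows `2023-06-14 23:16:45 [scrapy.middleware] INFO: Enabled item pipelines: []`, so the pipeline never ran. I honestly can't work out why; I've checked everything many times.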

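You could try adding the following to the settings file:

ITEM_PIPELINES = {
    "mediapro.pipelines.mediaPileline": 300,
    'scrapy.pipelines.images.ImagesPipeline': 1,
}
IMAGES_STORE = 'girlsLib'

Then override the item_completed method of the mediaPileline class in pipelines.py. This needs `from scrapy.exceptions import DropItem` at the top of the file and an `image_paths = scrapy.Field()` entry on MediaproItem:

def item_completed(self, results, item, info):
    # Keep the storage path of every image that downloaded successfully
    image_paths = [x['path'] for ok, x in results if ok]
    if not image_paths:
        raise DropItem("Item contains no images")
    item['image_paths'] = image_paths
    return item

That way the paths of the successfully downloaded images are saved on the item.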
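To make the list comprehension in `item_completed` easier to follow: `results` is a list of `(success, info)` tuples, where `info` is a dict containing a `path` key for successful downloads and a failure object otherwise. The sketch below runs the same filter on made-up sample data outside Scrapy; the function name `successful_paths`, the example URLs, and the plain `Exception` standing in for the failure object are all assumptions for illustration.

```python
def successful_paths(results):
    """Collect the storage paths of the downloads that succeeded."""
    return [info["path"] for ok, info in results if ok]


if __name__ == "__main__":
    # Made-up sample in the shape passed to item_completed
    sample = [
        (True, {"url": "https://example.com/a.jpg", "path": "a.jpg"}),
        (False, Exception("download failed")),  # stand-in for the failure object
    ]
    print(successful_paths(sample))
```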

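Based on the code you provided, your settings, spider, and pipeline implementation look correct. Please check the following:

- Make sure the mediaPileline class in the mediapro.pipelines module is imported correctly.
- Make sure the Scrapy project structure is correct: the mediapro.pipelines and mediapro.items modules should be in the right directories, and ITEM_PIPELINES and IMAGES_STORE should be set correctly in settings.py.
- Check that IMAGES_STORE is set correctly and that the directory exists with the right read and write permissions. In your settings, IMAGES_STORE is set to girlsLib, so make sure that directory exists and is writable.
- Make sure you are using the correct Scrapy command to run the spider. Try starting it with:

scrapy crawl img

where img is the name of the spider you defined.

If the images still do not download, check the log output from the Scrapy run for any error messages related to image downloading or pipeline processing. You can also set a more verbose log level (for example LOG_LEVEL = 'DEBUG') to get more detailed logs.

If the problem persists, please provide more log output or any other error messages so that I can help you further.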
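The log posted in the question does contain one pipeline-related message: `WARNING: Disabled mediaPileline: ImagesPipeline requires installing Pillow 4.0.0 or later`, immediately before `Enabled item pipelines: []`. That points to Pillow being missing from the Python environment that runs Scrapy. A minimal sketch for checking this from the same interpreter; the helper names `pillow_version` and `meets_minimum` are made up for illustration, and the version comparison is deliberately simple:

```python
from importlib import metadata


def pillow_version():
    """Return the installed Pillow version string, or None if it is not installed."""
    try:
        return metadata.version("Pillow")
    except metadata.PackageNotFoundError:
        return None


def meets_minimum(version, minimum=(4, 0, 0)):
    """Compare the leading numeric parts of a version string with a minimum tuple."""
    parts = []
    for piece in version.split(".")[:3]:
        if not piece.isdigit():
            break
        parts.append(int(piece))
    while len(parts) < 3:  # pad short versions such as "4.0"
        parts.append(0)
    return tuple(parts) >= minimum


if __name__ == "__main__":
    v = pillow_version()
    if v is None:
        print("Pillow is not installed")
    else:
        print("Pillow", v, "OK" if meets_minimum(v) else "is too old")
```

If this reports that Pillow is missing, installing it (for example with `pip install Pillow`) and rerunning `scrapy crawl img` should make the warning disappear, at which point the pipeline would be expected to show up under "Enabled item pipelines".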

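- I found a very good blog post that may help: "Python Crawler 5.2: Using the Pipeline Module of the Scrapy Framework".

- In addition, the section "3. Saving the data: completing pipelines.py" of the blog post "The Scrapy Crawler Framework" may solve your problem. You can read the excerpt below or go to the original post: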

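Start with the simplest version:

class MyspiderPipeline(object):
    def process_item(self, item, spider):
        # The item passed in is a dict-like Item object
        with open('mooc.json', 'a') as f:
            f.write(str(item) + '--\n')
        # Must return the item so it is passed on to the next stage
        return item

In settings.py, uncomment the three lines shown in the figure below.

Result: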

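Below are three variants that save to CSV, JSON, and a database. You can write just one of them, or use your own way of saving the data.

You can also write several, but then you need to set their priorities in settings.py (0 to 1000; the smaller the number, the higher the priority).

Saving to a JSON file:

import json


class MyspiderPipeline(object):
    """Save the scraped data in JSON format."""

    def __init__(self):
        # Open the file once here so that each item does not overwrite the previous one
        self.f = open('mooc.json', 'w', encoding='utf-8')

    def process_item(self, item, spider):
        # Read the data in the item and convert it to JSON
        line = json.dumps(dict(item), ensure_ascii=False, indent=4)
        self.f.write(line + '\n')
        # Must return the item so it is passed on to the next stage
        return item

    def open_spider(self, spider):
        """Called when the spider is opened."""
        pass

    def close_spider(self, spider):
        """Called when the spider has finished crawling."""
        self.f.close()

Saving to CSV:

class CsvPipeline(object):
    """Save the scraped data in CSV format."""

    def __init__(self):
        self.f = open('mooc.csv', 'w')

    def process_item(self, item, spider):
        self.f.write(str(item) + '\n')
        # item = dict(item)
        # self.f.write("{0}:{1}:{2}\n".format(item['title'], item['student'], item['url']))
        # Must return the item so it is passed on to the next stage
        return item

    def open_spider(self, spider):
        """Called when the spider is opened."""
        pass

    def close_spider(self, spider):
        """Called when the spider has finished crawling."""
        self.f.close()

Saving to a database: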

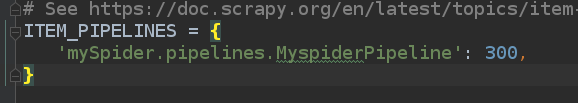

import pymysql


class MysqlPipeline(object):
    """
    Save the scraped data to a database.
    1. Create the Mooc database first.
    """

    def __init__(self):
        super(MysqlPipeline, self).__init__()
        self.conn = pymysql.connect(
            host='localhost',
            user='root',
            password='123456',  # use your own
            db='Mooc',
            charset='utf8',
        )
        self.cursor = self.conn.cursor()

    def process_item(self, item, spider):
        # item is an Item object; convert it to a dict
        item = dict(item)
        info = (item['title'], item['url'], item['image_url'], item['introduction'], item['student'])
        # Let the driver substitute the values instead of formatting them into the SQL string
        insert_sqli = "insert into moocinfo values(%s, %s, %s, %s, %s)"
        self.cursor.execute(insert_sqli, info)
        self.conn.commit()
        return item

    def open_spider(self, spider):
        """Called when the spider is opened."""
        create_sqli = "create table if not exists moocinfo (title varchar(50), url varchar(200), image_url varchar(200), introduction varchar(500), student int)"
        self.cursor.execute(create_sqli)

    def close_spider(self, spider):
        """Called when the spider has finished crawling."""
        self.cursor.close()
        self.conn.close()

Set their priorities in settings.py as shown in the figure below.

You can run it yourself to see the results; I won't go into more detail here.
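When a database pipeline inserts scraped text, it is generally safer to pass the values as query parameters than to format them into the SQL string, because a title containing a quote character would otherwise break the statement. The sketch below illustrates the idea with the standard library's `sqlite3` swapped in for MySQL so that it runs without a server; note that `sqlite3` uses `?` placeholders where `pymysql` uses `%s`. The helper names and the sample row are made up for illustration.

```python
import sqlite3


def make_db():
    """Create an in-memory database with a table shaped like moocinfo."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE IF NOT EXISTS moocinfo "
        "(title TEXT, url TEXT, image_url TEXT, introduction TEXT, student INTEGER)"
    )
    return conn


def insert_course(conn, info):
    """Insert one row, letting the driver handle quoting of the values."""
    conn.execute("INSERT INTO moocinfo VALUES (?, ?, ?, ?, ?)", info)
    conn.commit()


if __name__ == "__main__":
    conn = make_db()
    # The apostrophe in the title would break a string-formatted INSERT
    insert_course(conn, ("It's Python", "https://example.com/c/1",
                         "https://example.com/c/1.jpg", "An intro course", 120))
    print(conn.execute("SELECT title, student FROM moocinfo").fetchall())
    conn.close()
```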

Scrapy framework quick start

This one goes a bit deeper:

https://blog.csdn.net/qq_44907926/article/details/119531324