GAN训练效果出现问题

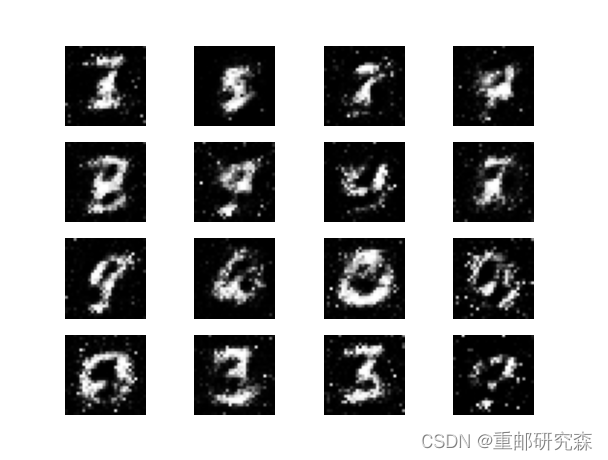

网上现有的GAN实现手写数字生成的代码,代码未做任何改动,在自己电脑上面运行的效果却很差。这是什么原因呢?开始训练还算正常,后面就出现了下面的问题。正常是可以计算下去的。

图片逐渐失真

代码就是下面网上常见的代码。

```python

from __future__ import print_function, division

from keras.datasets import mnist

from keras.layers import Input, Dense, Reshape, Flatten, Dropout

from keras.layers import BatchNormalization, Activation, ZeroPadding2D

from keras.layers.advanced_activations import LeakyReLU

from keras.layers.convolutional import UpSampling2D, Conv2D

from keras.models import Sequential, Model

from tensorflow.keras.optimizers import Adam

import matplotlib.pyplot as plt

import numpy as np

class DCGAN():

def __init__(self):

self.img_rows = 28

self.img_cols = 28

self.channels = 1

self.img_shape = (self.img_rows, self.img_cols, self.channels)

self.latent_dim = 100

optimizer = Adam(0.0002)

self.discriminator = self.build_discriminator()

self.discriminator.compile(loss='binary_crossentropy',

optimizer=optimizer,

metrics=['accuracy'])

self.generator = self.build_generator()

z = Input(shape=(self.latent_dim,))

img = self.generator(z)

self.discriminator.trainable = False

valid = self.discriminator(img)

self.combined = Model(z, valid)

self.combined.compile(loss='binary_crossentropy', optimizer=optimizer)

def build_generator(self):

model = Sequential()

model.add(Dense(128 * 7 * 7, activation="relu", input_dim=self.latent_dim))

model.add(Reshape((7, 7, 128)))

model.add(UpSampling2D())

model.add(Conv2D(128, kernel_size=3, padding="same"))

model.add(BatchNormalization(momentum=0.8))

model.add(Activation("relu"))

model.add(UpSampling2D())

model.add(Conv2D(64, kernel_size=3, padding="same"))

model.add(BatchNormalization(momentum=0.8))

model.add(Activation("relu"))

model.add(Conv2D(self.channels, kernel_size=3, padding="same"))

model.add(Activation("tanh"))

model.summary()

noise = Input(shape=(self.latent_dim,))

img = model(noise)

return Model(noise, img)

def build_discriminator(self):

model = Sequential()

model.add(Conv2D(32, kernel_size=3, strides=2, input_shape=self.img_shape, padding="same"))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Conv2D(64, kernel_size=3, strides=2, padding="same"))

model.add(ZeroPadding2D(padding=((0,1),(0,1))))

model.add(BatchNormalization(momentum=0.8))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Conv2D(128, kernel_size=3, strides=2, padding="same"))

model.add(BatchNormalization(momentum=0.8))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Conv2D(256, kernel_size=3, strides=1, padding="same"))

model.add(BatchNormalization(momentum=0.8))

model.add(LeakyReLU(alpha=0.2))

model.add(Dropout(0.25))

model.add(Flatten())

model.add(Dense(1, activation='sigmoid'))

model.summary()

img = Input(shape=self.img_shape)

validity = model(img)

return Model(img, validity)

def train(self, iter, batch_size=128, save_interval=50):

(X_train, _), (_, _) = mnist.load_data()

X_train = X_train / 127.5 - 1.

X_train = np.expand_dims(X_train, axis=3)

valid = np.ones((batch_size, 1))

fake = np.zeros((batch_size, 1))

for i in range(iter):

idx = np.random.randint(0, X_train.shape[0], batch_size)

imgs = X_train[idx]

noise = np.random.normal(0, 1, (batch_size, self.latent_dim))

gen_imgs = self.generator.predict(noise)

d_loss_real = self.discriminator.train_on_batch(imgs, valid)

d_loss_fake = self.discriminator.train_on_batch(gen_imgs, fake)

d_loss = 0.5 * np.add(d_loss_real, d_loss_fake)

g_loss = self.combined.train_on_batch(noise, valid)

print ("%d [D loss: %f, acc.: %.2f%%] [G loss: %f]" % (i, d_loss[0], 100*d_loss[1], g_loss))

if i % save_interval == 0:

self.save_imgs(i)

def save_imgs(self, iter):

r, c = 5, 5

noise = np.random.normal(0, 1, (r * c, self.latent_dim))

gen_imgs = self.generator.predict(noise)

gen_imgs = 0.5 * gen_imgs + 0.5

fig, axs = plt.subplots(r, c)

cnt = 0

for i in range(r):

for j in range(c):

axs[i,j].imshow(gen_imgs[cnt, :,:,0], cmap='gray')

axs[i,j].axis('off')

cnt += 1

fig.savefig("n/mnist_%d.png" % iter)

plt.close()

if __name__ == '__main__':

dcgan = DCGAN()

dcgan.train(iter=10000, batch_size=32, save_interval=50)

从你的图片来看,过拟合了应该,所以越来越差了

你可以将这几个返回值保存依赖,然后绘制一下图片看下

- 建议你看下这篇博客👉 :GAN生成对抗网络

- 除此之外, 这篇博客: 对抗网络(GAN)手写数字生成中的 1.跑通代码 部分也许能够解决你的问题, 你可以仔细阅读以下内容或跳转源博客中阅读:

我这个人对于任何代码,我都会先去跑通之和才会去观看内容,哈哈哈,所以第一步我们先不管37=21,直接把博主的代码复制黏贴一份运行结果。(PS:做了一些修改,因为原文是jupyter,而我在pycharm)

import tensorflow as tf gpus = tf.config.list_physical_devices("GPU") if gpus: tf.config.experimental.set_memory_growth(gpus[0], True) # 设置GPU显存用量按需使用 tf.config.set_visible_devices([gpus[0]], "GPU") # 打印显卡信息,确认GPU可用 print(gpus) from tensorflow.keras import layers, datasets, Sequential, Model, optimizers from tensorflow.keras.layers import LeakyReLU, UpSampling2D, Conv2D import matplotlib.pyplot as plt import numpy as np import sys,os,pathlib img_shape = (28, 28, 1) latent_dim = 200 def build_generator(): # ======================================= # # 生成器,输入一串随机数字生成图片 # ======================================= # model = Sequential([ layers.Dense(256, input_dim=latent_dim), layers.LeakyReLU(alpha=0.2), # 高级一点的激活函数 layers.BatchNormalization(momentum=0.8), # BN 归一化 layers.Dense(512), layers.LeakyReLU(alpha=0.2), layers.BatchNormalization(momentum=0.8), layers.Dense(1024), layers.LeakyReLU(alpha=0.2), layers.BatchNormalization(momentum=0.8), layers.Dense(np.prod(img_shape), activation='tanh'), layers.Reshape(img_shape) ]) noise = layers.Input(shape=(latent_dim,)) img = model(noise) return Model(noise, img) def build_discriminator(): # ===================================== # # 鉴别器,对输入的图片进行判别真假 # ===================================== # model = Sequential([ layers.Flatten(input_shape=img_shape), layers.Dense(512), layers.LeakyReLU(alpha=0.2), layers.Dense(256), layers.LeakyReLU(alpha=0.2), layers.Dense(1, activation='sigmoid') ]) img = layers.Input(shape=img_shape) validity = model(img) return Model(img, validity) # 创建判别器 discriminator = build_discriminator() # 定义优化器 optimizer = tf.keras.optimizers.Adam(1e-4) discriminator.compile(loss='binary_crossentropy', optimizer=optimizer, metrics=['accuracy']) # 创建生成器 generator = build_generator() gan_input = layers.Input(shape=(latent_dim,)) img = generator(gan_input) # 在训练generate的时候不训练discriminator discriminator.trainable = False # 对生成的假图片进行预测 validity = discriminator(img) combined = Model(gan_input, validity) combined.compile(loss='binary_crossentropy', optimizer=optimizer) def sample_images(epoch): """ 保存样例图片 """ row, col = 4, 4 noise = np.random.normal(0, 1, (row*col, latent_dim)) gen_imgs = generator.predict(noise) fig, axs = plt.subplots(row, col) cnt = 0 for i in range(row): for j in range(col): axs[i,j].imshow(gen_imgs[cnt, :,:,0], cmap='gray') axs[i,j].axis('off') cnt += 1 fig.savefig("images/%05d.png" % epoch) # fig.savefig(" E:/2021_Project_YanYiXia/AI/21/对抗网络(GAN)手写数字生成/images/%05d.png" % epoch) plt.close() def train(epochs, batch_size=128, sample_interval=50): # 加载数据 (train_images, _), (_, _) = tf.keras.datasets.mnist.load_data() # 将图片标准化到 [-1, 1] 区间内 train_images = (train_images - 127.5) / 127.5 # 数据 train_images = np.expand_dims(train_images, axis=3) # 创建标签 true = np.ones((batch_size, 1)) fake = np.zeros((batch_size, 1)) # 进行循环训练 for epoch in range(epochs): # 随机选择 batch_size 张图片 idx = np.random.randint(0, train_images.shape[0], batch_size) imgs = train_images[idx] # 生成噪音 noise = np.random.normal(0, 1, (batch_size, latent_dim)) # 生成器通过噪音生成图片,gen_imgs的shape为:(128, 28, 28, 1) gen_imgs = generator.predict(noise) # 训练鉴别器 d_loss_true = discriminator.train_on_batch(imgs, true) d_loss_fake = discriminator.train_on_batch(gen_imgs, fake) # 返回loss值 d_loss = 0.5 * np.add(d_loss_true, d_loss_fake) # 训练生成器 noise = np.random.normal(0, 1, (batch_size, latent_dim)) g_loss = combined.train_on_batch(noise, true) print("%d [D loss: %f, acc.: %.2f%%] [G loss: %f]" % (epoch, d_loss[0], 100 * d_loss[1], g_loss)) # 保存样例图片 if epoch % sample_interval == 0: sample_images(epoch) #train(epochs=30000, batch_size=256, sample_interval=200) import imageio def compose_gif(): # 图片地址 data_dir = "E:/2021_Project_YanYiXia/AI/21/对抗网络(GAN)手写数字生成/images" data_dir = pathlib.Path(data_dir) paths = list(data_dir.glob('*')) gif_images = [] for path in paths: print(path) gif_images.append(imageio.imread(path)) imageio.mimsave("test.gif", gif_images, fps=2) compose_gif()点击pycharm运行即可得到结果,此图为对抗网络生成的手写数字

- 您还可以看一下 CSDN讲师老师的详解GAN在黑白照片上色中的应用课程中的 详解GAN在黑白照片上色中的应用-下小节, 巩固相关知识点